English speaker here.

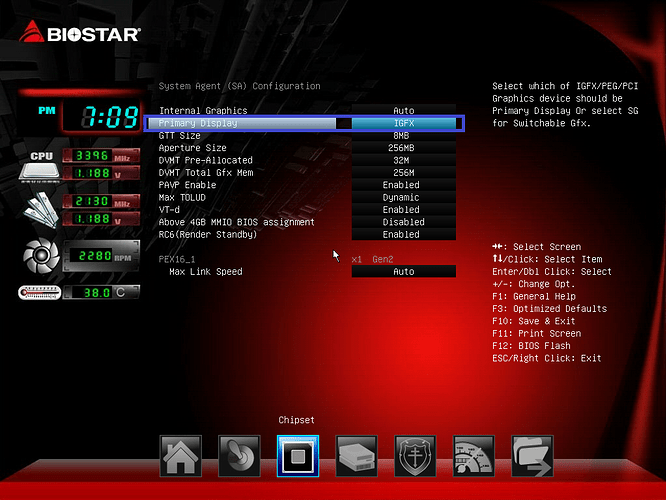

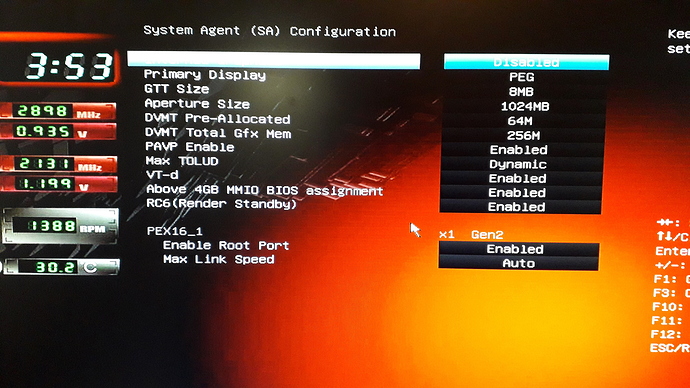

I followed all of your BIOS configuration steps (primary GPU set to use the PEG/x16 slot, PCI-E speed set to Gen2, CSM enabled for Other OS installation, unneeded devices disabled, etc.).

- All GPUs are brand new AMD RX-570 and RX-580 (not modded), and risers are working.

I am using hive-flasher to deploy a large number of GPUs on Biostar TB250-BTC PRO motherboards (upgraded to the latest BIOS version).

Here are the issues:

- First of all, the instructions for FARM_HASH usage (on this forum) and hive-flasher (on the GitHub page) are inaccurate and inconsistent. You need to update your instructions on both pages for consistency. From trial and error, I discovered that one must fill out BOTH the “rig-config-example.txt” and “rig.conf” files, providing the FARM_HASH and password in each. hive-flasher will not let you proceed unless FARM_HASH and password are filled out in rig-config-example.txt, and HIVE will not properly add the rig to the web dashboard without the rig.conf file.

-

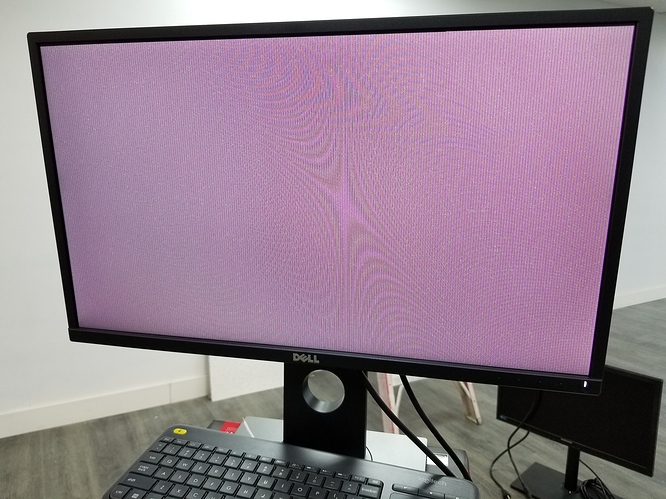

Second issue: Unable to install the image when motherboard is set to use PEG/x16 slot for monitor. It tries to boot into the USB and always ends up with a frozen screen with white noise (but will install fine if motherboard is set to iGFX or PCI). Here is a photo:

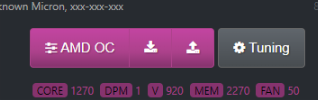

- Third issue: as another user has already reported, the rig does not always boot into the GUI with more than 6 GPUs connected. With 8, 10, or 12 GPUs, it will not boot into the GUI or show anything on the connected monitor. Another user has posted a photo of this issue, and it looks like this (black screen with blinking cursor):

- Fourth and final issue! ALL of the GPUs report the following error: Invalid PCI ROM header signature: expecting 0xaa55, got 0xffff
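To make the fourth issue concrete, here is a rough sketch of what I understand the check behind that message to be: a PCI expansion ROM image starts with the bytes 0x55 0xAA, which read as a little-endian 16-bit value give 0xaa55. A value of 0xffff would mean the read returned all ones, which usually suggests the ROM could not be read at all rather than that it is corrupt. The function names here are my own for illustration, not anything from Hive OS, and the input is just a byte string standing in for a dumped ROM.

```python
import struct

# A PCI expansion ROM begins with bytes 0x55, 0xAA; read as a
# little-endian 16-bit value this is 0xAA55.
PCI_ROM_SIGNATURE = 0xAA55


def rom_signature(data: bytes) -> int:
    """Return the 16-bit little-endian value at the start of a ROM image."""
    if len(data) < 2:
        raise ValueError("ROM image is shorter than 2 bytes")
    (value,) = struct.unpack_from("<H", data, 0)
    return value


def describe_rom(data: bytes) -> str:
    """Classify a ROM image by its header signature (illustrative helper)."""
    sig = rom_signature(data)
    if sig == PCI_ROM_SIGNATURE:
        return "ok: signature 0xaa55"
    if sig == 0xFFFF:
        # All ones is what an unanswered or unmapped read typically returns.
        return "bad: got 0xffff (read returned all ones, ROM likely not readable)"
    return f"bad: expecting 0xaa55, got {sig:#06x}"


if __name__ == "__main__":
    print(describe_rom(bytes([0x55, 0xAA, 0x00, 0x00])))  # valid header
    print(describe_rom(b"\xff\xff\xff\xff"))              # what my GPUs report
```

If this reading is right, the error on all of my cards says the ROM contents were never visible to the system, not that every card has a bad BIOS.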

Can anyone please tell me what might be going wrong? My biggest concerns are the third issue, where the rig does not boot into the GUI (or show output on the connected monitor) with more than 6 GPUs, and the fourth issue (the Invalid PCI ROM header signature error).

I would appreciate prompt help, as I am setting up a large-scale mining facility and have a deadline to finish everything. If I do not receive adequate help, I will have no option but to move to SMOS or minerstat.

Thank you.